Across multiple AI programs, a consistent pattern emerges: success in controlled environments, failure at scale. Pilots work. Demos impress. Models perform.

And then somewhere between experimentation and production, momentum fades.

Not because organizations lack ambition.

Not because teams lack capability.

But because building models is not the same as building systems that can sustain intelligence in real-world conditions.

This gap is where most AI transformations quietly break down.

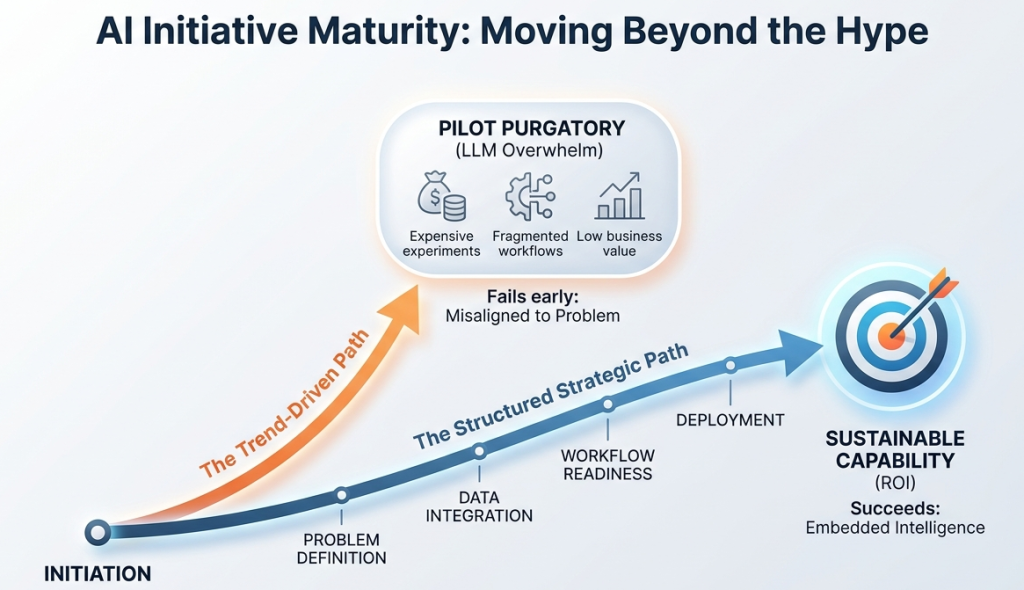

AI Initiative Maturity: Moving Beyond the Hype

Most organizations plateau before operational maturity—not because of models, but because the surrounding systems are not designed for scale.

Where Things Actually Begin to Break

When AI initiatives don’t deliver, the conversation usually focuses on models, tools, or algorithms.

In practice, the failure starts much earlier—and unfolds gradually.

1. It Starts With the Wrong Question — And Everything Follows From It

Most AI journeys begin with:

“Where can we use AI?”

It sounds reasonable. It is also the wrong starting point.

This approach typically comes from teams with strong business or systems understanding but limited exposure to how intelligent systems behave in production.

So AI gets layered onto existing workflows.

The result: incremental improvement at best, structural mismatch at worst.

AI does not simply optimize decisions.

It often changes how decisions should be made.

Without reframing the problem itself, even well-built models struggle to create meaningful impact.

2. The Quiet Trap of Trend-Driven AI

In early stages, teams tend to converge on what is visible:

- LLMs

- Copilots

- Agent-based solutions

Not necessarily because they are the right fit—but because they are the most discussed.

This creates a subtle but powerful shift: decisions follow trends instead of problem structure.

What gets missed:

- When is classical ML sufficient?

- When is a sequential decision framework required?

- What does “agentic” actually mean in an operational context?

Without this grounding, architecture choices become reactive rather than intentional.

3. Data Doesn’t Fail Loudly — It Erodes Outcomes

In early pilots, data appears sufficient.

Models train. Metrics look promising.

Then production begins.

Within months, underlying issues surface:

- Fragmentation across systems

- Inconsistent definitions

- Missing context

- Data that is technically available but not operationally usable

A significant portion of effort shifts to fixing data midstream—slowing progress and diluting outcomes.

Data rarely causes immediate failure.

It gradually reduces system reliability until trust breaks.
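One way to make this erosion visible early is to audit records before they reach the model. The sketch below is illustrative only: the field names, the sample records, and the `audit_records` helper are all hypothetical, and it covers just two of the issues listed above (missing context and inconsistent definitions).

```python
from collections import Counter


def audit_records(records, required_fields, allowed_values):
    """Count missing required fields and values outside an agreed vocabulary."""
    issues = Counter()
    for record in records:
        # Missing context: a required field is absent or empty.
        for field in required_fields:
            if record.get(field) in (None, ""):
                issues[f"missing:{field}"] += 1
        # Inconsistent definitions: a value that no agreed definition covers.
        for field, allowed in allowed_values.items():
            value = record.get(field)
            if value not in (None, "") and value not in allowed:
                issues[f"unexpected:{field}"] += 1
    return dict(issues)


# Hypothetical records merged from two systems that encode "status" differently.
records = [
    {"customer_id": "c1", "status": "active", "region": "EU"},
    {"customer_id": "c2", "status": "A", "region": ""},
    {"customer_id": "c3", "status": "inactive", "region": None},
]

report = audit_records(
    records,
    required_fields=["customer_id", "region"],
    allowed_values={"status": {"active", "inactive"}},
)
print(report)
```

Tracking a report like this over time, rather than once during the pilot, is what turns a silent data problem into a visible one.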

4. The System Becomes the Bottleneck

Even when models perform well, integration becomes the constraint.

AI does not operate in isolation. It sits inside:

- Legacy systems

- Business workflows

- Latency-sensitive environments

- Governance and compliance layers

At this point, friction emerges:

- Real-time expectations vs batch architectures

- Model outputs vs decision ownership

- Intelligence vs existing process rigidity

The challenge is no longer model quality.

It is whether the surrounding system can absorb and act on intelligence consistently.

5. MLOps Is Where Ambition Meets Reality

Most AI strategies implicitly assume a smooth path from model development to deployment.

In reality, this is where complexity compounds:

- Versioning models and data together

- Monitoring performance drift

- Managing retraining cycles

- Aligning engineering, data, and business ownership

What worked once does not necessarily work repeatedly.

Without a robust operational backbone, AI remains stuck in a cycle of isolated successes.
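To make the drift-monitoring item concrete, here is a minimal sketch of one common technique, the Population Stability Index (PSI), which compares the distribution of a feature or score in production against a baseline. The data, the bin count, and the 0.2 alert threshold are assumptions for illustration; the threshold is a widely quoted rule of thumb, not a standard, and real monitoring would cover many features and model outputs.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range are clipped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at a small epsilon so the logarithm stays defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    base, curr = proportions(baseline), proportions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, curr))


# Hypothetical model scores: training-time baseline vs. a shifted production sample.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]

ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff, tune per use case

psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
needs_review = psi_shifted > ALERT_THRESHOLD

print(f"PSI (same data): {psi_same:.4f}")
print(f"PSI (shifted):   {psi_shifted:.4f}, needs review: {needs_review}")
```

A check like this only helps if someone owns the alert, which is why the ownership question in the list above matters as much as the metric itself.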

6. Scale Transforms Everything — Especially Cost

What works at small scale often breaks under real-world load.

As systems scale:

- Inference costs rise

- Latency constraints tighten

- Infrastructure complexity increases

- Trade-offs between accuracy, speed, and cost become unavoidable

The problem shifts from:

“Can we build this?”

to:

“Can we sustain this economically and operationally?”

This transition is where many initiatives stall.
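A rough back-of-the-envelope calculation shows why cost changes the conversation. Every number below (request volumes, tokens per request, and the price per 1,000 tokens) is a made-up assumption for illustration, not a quote from any provider, and the model ignores caching, batching, and infrastructure overhead.

```python
def monthly_inference_cost(requests_per_day, tokens_per_request,
                           price_per_1k_tokens, days=30):
    """Estimate monthly spend for a token-priced model under a flat-rate assumption."""
    daily_tokens = requests_per_day * tokens_per_request
    return daily_tokens / 1000 * price_per_1k_tokens * days


# Hypothetical pilot: a small internal user group.
pilot = monthly_inference_cost(
    requests_per_day=1_000, tokens_per_request=2_000, price_per_1k_tokens=0.002
)

# Hypothetical production rollout: same model, same prompt size, far more traffic.
production = monthly_inference_cost(
    requests_per_day=500_000, tokens_per_request=2_000, price_per_1k_tokens=0.002
)

print(f"pilot:      {pilot:,.2f} per month")
print(f"production: {production:,.2f} per month")
print(f"ratio:      {production / pilot:.0f}x")
```

Under this simple model, cost grows linearly with traffic, so a figure that was negligible in the pilot becomes a budget line at scale. That is usually the point where trade-offs between accuracy, speed, and cost have to be made explicitly.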

Moving From Models to Systems

Most organizations are not failing at AI.

They are failing at operationalizing intelligence.

That requires a shift in perspective:

- From models → systems

- From predictions → decisions

- From isolated use cases → integrated capabilities

As AI evolves, the center of gravity is moving toward:

- Decision intelligence

- Reinforcement learning

- Multi-agent systems

These are not incremental extensions of current approaches.

They require fundamentally different ways of thinking about architecture, control, and adaptation.

Closing Thought

The challenge ahead is not building better models.

It is building systems that can make, adapt, and sustain decisions at scale.

That is a significantly harder problem.

And most organizations are still at the beginning of that journey.